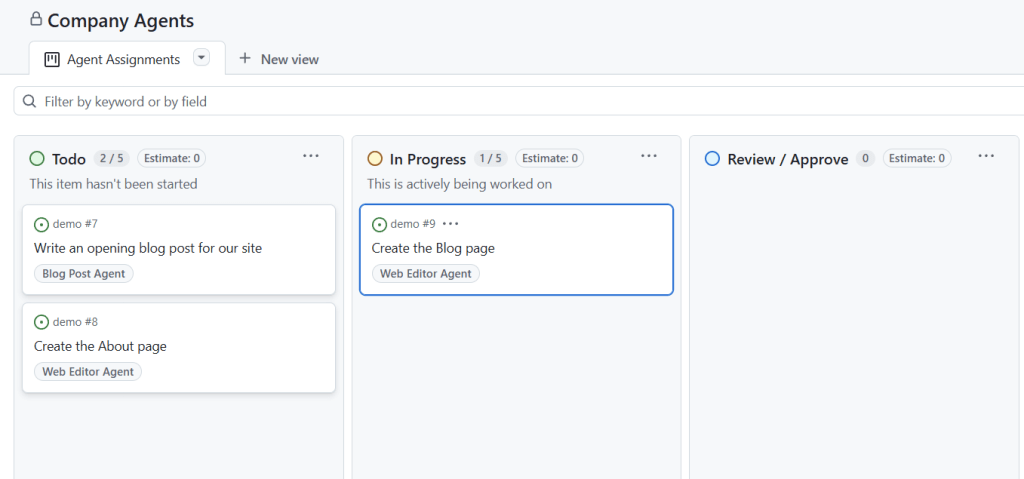

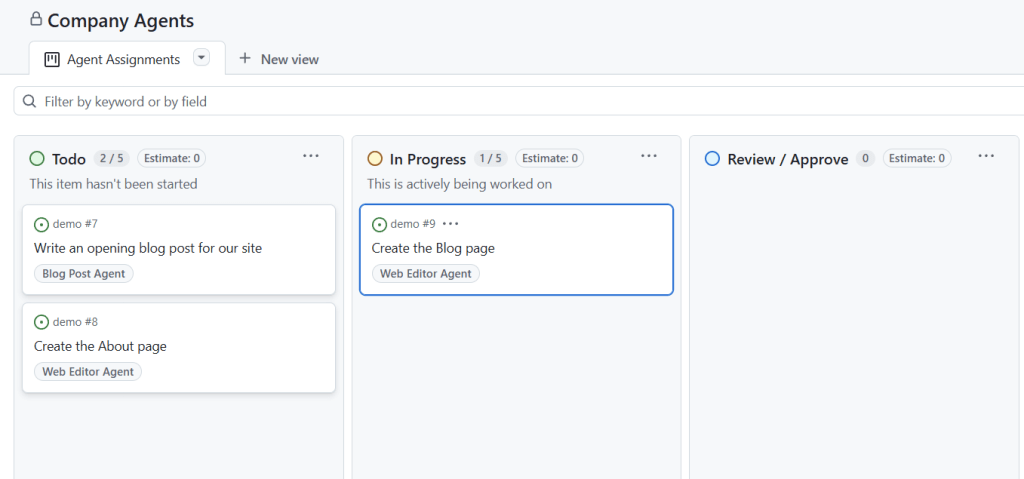

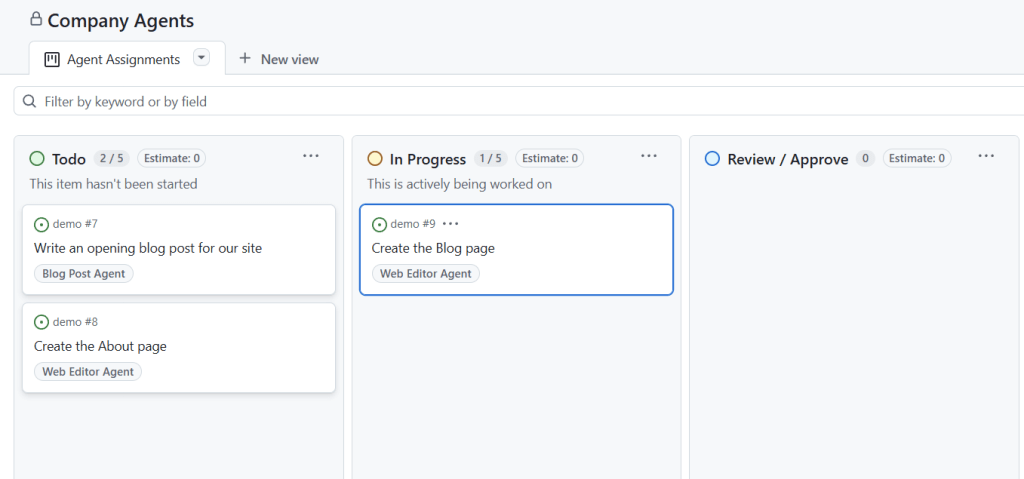

Todo board for Company Agents

For developers, one of the most effective ways to grow and improve is to read code written by others. This practice is often overlooked, but it is a powerful method for learning what makes code readable, understandable, and well-structured.

Start by picking small and manageable examples of code. Focus on reviewing code created by others, whether it’s from open-source projects, libraries, or colleagues. Work through the code, line by line, and aim to understand its functionality. Don’t worry if you don’t grasp everything right away—this process is about learning to decode unfamiliar approaches and picking up insights along the way.

Pay attention to the moments when you understand the code easily. What about the names, comments, structure, or logic made it so? Similarly, notice what feels difficult or unclear. This reflection is key to identifying which practices make the code accessible and which may hinder comprehension.

Reading and reflecting on others’ code allows you to develop a deeper awareness of what makes code readable and maintainable. Learn from the clarity you encounter and use those lessons to guide your own coding style. Strive to write code that is easy to understand—not only for your colleagues but for yourself when revisiting your work weeks or months later.

Finally, make code reading a regular habit. The more you expose yourself to different styles and approaches, the better you’ll become at adapting and improving your own practices. Learning from others is an ongoing process, one that can help you grow immensely over time.

Language models have proven themselves masters at creating text that seems polished and professional. They are extraordinarily good at appearing competent, crafting persuasive responses, and even taking on roles within creative or professional scenarios like actors in a play. These qualities make them versatile tools for tasks such as storytelling, content creation, and communication.

However, much of their strength lies in their ability to imitate. Language models excel at mimicking reality, generating responses that can feel authentic and convincing. Yet, this skill of “pretending” can be deceptive. While their outputs can seem well-informed, it’s essential to remember that they lack genuine understanding. They generate text based on patterns learned from vast datasets, and their confidence can mask a lack of true comprehension.

This ability to imitate creates a potential pitfall. When relying too much on outputs from these tools, it’s easy to let personal beliefs, hopes, and expectations interfere with judgment. If users treat these suggestions as truths or infallible answers, there’s a risk of misusing them, whether in critical decision-making or emotional reasoning. Humans may inadvertently project their own intentions onto the tool and end up in a trap of unwarranted trust.

The key to avoiding these risks is approaching language models with a critical mindset. What they produce should be seen as helpful hints, not unquestionable facts. Fact-check their results, consult other resources, and always pair their outputs with human expertise. Their value lies in how we choose to use them—when complemented with careful analysis and application, they can be truly transformative tools.

Language models are powerful but are not substitutes for human understanding. Their role is best appreciated when we recognize their strengths as well as their inherent limitations, ensuring that our use of them remains purposeful, thoughtful, and effective.

When you ask a question in an app, it might feel like you’re interacting with an expert who knows everything. In reality, there’s a structured process behind the scenes that organizes existing information into a useful response. Let’s break down how these apps work.

The journey begins when you type in your question. The app uses a Small Language Model to extract key terms from your query—essentially identifying the main ideas or keywords. These keywords are then used to perform a regular search engine query on platforms like Google or Bing. The search results are processed by the app, which evaluates summaries of the top 100 hits using another Small Language Model to rank the 10 most relevant pages.

Next, the app crawls the content of those 10 pages, pulling in the most relevant material. This content is combined with your original question to create a detailed context. Finally, this context is sent to a Large Language Model, which generates a polished response that feels complete and confident—almost as if the app itself “knew” the answer.
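The flow described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `extract_keywords` and `rank_hits` are trivial stand-ins for the two small language models, and `search`, `fetch`, and `generate` are hypothetical callables that a real app would back with a search engine, a crawler, and a large language model.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stopword list; a real app would use a small language model here.
STOPWORDS = {"a", "an", "the", "is", "are", "do", "does", "how", "what", "why",
             "when", "where", "which", "who", "in", "on", "of", "to", "for",
             "and", "or"}


@dataclass
class Hit:
    url: str
    summary: str


def extract_keywords(question: str) -> list[str]:
    """Stand-in for the first small model: keep the non-stopword terms."""
    words = [w.strip("?.,!").lower() for w in question.split()]
    return [w for w in words if w and w not in STOPWORDS]


def rank_hits(hits: list[Hit], keywords: list[str], top_n: int = 10) -> list[Hit]:
    """Stand-in for the second small model: score summaries by keyword overlap."""
    def score(hit: Hit) -> int:
        text = hit.summary.lower()
        return sum(1 for keyword in keywords if keyword in text)

    return sorted(hits, key=score, reverse=True)[:top_n]


def build_context(question: str, pages: list[str]) -> str:
    """Combine the original question with the crawled page content."""
    sources = "\n\n".join(pages)
    return f"Question: {question}\n\nSources:\n{sources}"


def answer(question: str,
           search: Callable[[str], list[Hit]],
           fetch: Callable[[str], str],
           generate: Callable[[str], str],
           max_hits: int = 100,
           top_n: int = 10) -> str:
    keywords = extract_keywords(question)            # 1. extract key terms
    hits = search(" ".join(keywords))[:max_hits]     # 2. regular search query
    best = rank_hits(hits, keywords, top_n)          # 3. rank the summaries
    pages = [fetch(hit.url) for hit in best]         # 4. crawl the top pages
    return generate(build_context(question, pages))  # 5. ask the large model
```

Passing the search, crawl, and generation steps in as functions keeps the sketch runnable without any network access, and it makes the point of the paragraph visible: every step is retrieval or filtering until the very last call.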

Though this process may seem intelligent, it’s simply an optimized way of finding, filtering, and presenting information. The system doesn’t actually “know” anything; instead, it mimics understanding by repackaging existing knowledge.

These apps provide a valuable service by streamlining searches. Instead of sifting through endless links yourself, they consolidate information into a single, user-friendly response. This can save time and help with tasks such as brainstorming ideas, summarizing research, or finding information quickly.

Still, there are limitations. The quality of the response depends entirely on the data available and how the app ranks relevance. It’s always worth verifying critical information, as the system may miss nuances or context that you’d notice when manually researching.

Ultimately, language model-powered apps are useful tools that make information easier to access and process. They don’t provide true intelligence, but they can be an efficient way to search, summarize, and communicate ideas. Use them for what they’re good at, and stay thoughtful when evaluating results.

App developers are busy leveraging advanced tools, such as language models, to revolutionize the way they build domain-specific applications. These tools are helping improve coding, testing, documentation, security, and general software engineering processes, making app development faster, more efficient, and more reliable. Developers focus on creating scalable, secure, and technically robust applications that meet high standards and long-term needs. However, they often face challenges in deeply understanding the unique requirements and workflows of specific domains.

At the same time, domain experts are bypassing developers altogether to build their own applications. Equipped with deep knowledge of their fields and a clear understanding of what works and what they want, these professionals are creating solutions tuned specifically to their needs. They care about functionality and outcomes, not about how the underlying code is written. Thanks to the rise of user-friendly tools, such as no-code and low-code platforms, they can build apps quickly without relying on software development teams. While their solutions are practical and tailored, they can face limitations in areas like scalability, security, and technical refinement.

This divide between app developers and domain experts raises an important question. Who will take the lead in shaping domain-specific apps? Will app developers prevail with their technical precision and ability to scale solutions? Will domain experts win through their intimate knowledge of what matters in their industries? Or perhaps the future belongs to those who can do both—combining software engineering expertise with domain insight to bridge the gap.

The most promising path forward may lie in collaboration. When app developers and domain experts work together, they can create applications that combine technical robustness with tailored functionality. App developers can help build solid infrastructures, while domain experts provide the insights needed to create tools that truly solve practical problems. Another possibility is the emergence of hybrid creators—individuals skilled in both software development and domain-specific knowledge, capable of weaving together the strengths of both groups.

Rather than focusing on which group “wins,” the future of domain-specific apps is about leveraging the best of both worlds. Innovation will thrive when technical expertise and domain knowledge come together, ensuring apps can meet immediate needs while remaining scalable, secure, and impactful over time. The opportunities are immense, and success belongs to those willing to embrace collaboration or develop hybrid approaches that serve both technical and domain goals.

When designing apps, it’s easy to assume users interact with them in ideal conditions: perfect lighting, full attention, and comfortable surroundings. But the reality is very different. People use apps while commuting, sitting outdoors, or in challenging environments—and some users may even have physical or visual impairments that affect their experience. Testing apps under these less-than-optimal conditions is a practical way to uncover flaws in usability and ensure your design works for everyone.

One way to identify problem areas is to simulate real-world challenges while using your app. Try using it without glasses if you typically wear them, or wear overly strong glasses to distort your vision. Hold the screen very close to your face and see how comfortable it is to interact with your design at that distance. These experiments can reveal whether your app supports users with varying visual needs and highlight areas where readability or functionality needs improvement.

Lighting conditions can also drastically impact usability. Lower the brightness on your screen to mimic usage in dim environments or test your app outdoors in direct sunlight, where glare makes viewing difficult. Another option is to enable a black-and-white or grayscale filter on your device and evaluate whether your app’s key elements remain functional and clear.
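A manual grayscale check can be complemented with a number. The sketch below is not part of the testing routine described above; it swaps in the contrast ratio defined in the WCAG 2 guidelines, which depends on luminance rather than hue and so flags color pairs that blur together once color is removed. The function names are illustrative, and the 4.5:1 threshold is the WCAG AA level for normal-size text.

```python
RGB = tuple[int, int, int]


def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    """Relative luminance as defined by WCAG 2 (0.0 = black, 1.0 = white)."""
    r, g, b = (_linear(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: RGB, background: RGB) -> float:
    """WCAG contrast ratio, from 1.0 (identical) up to 21.0 (black on white)."""
    lum_a = relative_luminance(foreground)
    lum_b = relative_luminance(background)
    lighter, darker = max(lum_a, lum_b), min(lum_a, lum_b)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: RGB, background: RGB) -> bool:
    """True if the pair meets the 4.5:1 AA threshold for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5


# Black on white is the maximum possible contrast.
print(passes_aa((0, 0, 0), (255, 255, 255)))
# Red on green differs strongly in hue but not in luminance.
print(passes_aa((255, 0, 0), (0, 128, 0)))
```

A pair that fails this check is a likely candidate for the elements that become hard to tell apart under a grayscale filter or in dim light.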

Check how well your app adapts to font and zoom changes. Shrink the text size or zoom out to see whether content stays readable on compact displays and small screens. Then enlarge the font or zoom in to check whether users with impaired vision can still navigate your app comfortably.

Movement and accessibility challenges can offer additional insights. Try using the app with just one finger on each hand, or with only one hand. This mimics how your app might be used while multitasking, like carrying a bag or holding a handrail on public transport. These tests also help highlight pain points for users with disabilities or limited mobility.

By running your app through these conditions, you’ll learn what works and what doesn’t, uncover pain points, and identify areas for improvement. Small adjustments—such as improving text legibility, tweaking button placement, or ensuring responsiveness to varying screen settings—can have a big impact on how user-friendly your app feels.

Testing under challenging conditions is more than just a design exercise; it’s an opportunity to build something adaptable, usable, and inclusive. Push your app to its limits during development and prioritize accessibility from the start. If it still works when you’re struggling to use it, then you’re on the right track to creating an app that will work for everyone.

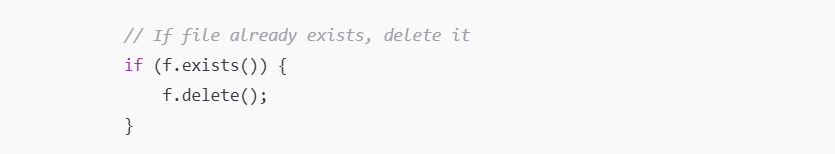

Writing code that is clear and easy to understand is a fundamental goal in software development. Readable code allows developers to quickly grasp its purpose, evaluate its quality, and make improvements. This becomes especially critical when working with generated code, where much of the effort goes into reading, understanding, and quality-assuring what is produced, rather than writing it from scratch.

Despite this, formatting that aids comprehension is often absent from code. Line breaks that separate logical sections and indentation that shows hierarchy and flow are applied inconsistently, if at all. Plain source text offers no visual tools like highlighting or underlining to emphasize important elements. It lacks paragraph-like divisions to separate ideas and often doesn’t include clear headings or structured sections that help navigate larger chunks of work.

These missing formatting features are easy to take for granted in other forms of writing, such as documents or articles. In those contexts, they play a significant role in organizing content and guiding the reader. Without similar support in code, developers are left to navigate dense, unstructured blocks of text, making the process of understanding much more tedious.

This problem is amplified with generated code. Developers working with generated code often spend the majority of their time trying to read and understand it. Poor formatting adds unnecessary complexity, slowing down tasks like debugging, quality assurance, and feature development. More structured formatting would make generated code easier to interpret, reducing the time and effort required to work with it effectively.

By applying principles of formatting to code—such as better use of spacing, structure, and visual cues—developers can make their work less about deciphering and more about solving problems. Thoughtful formatting isn’t just about aesthetics; it’s about creating an environment where developers can focus on what truly matters, whether they’re writing code or working with what has been generated.
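As a small illustration of these principles, here is a hypothetical function (the name `summarize_orders` and the order fields are invented for this example) that uses blank lines as paragraph breaks, comments as section headings, and a docstring as a title. It is a sketch of one possible style, not a formatting standard.

```python
def summarize_orders(orders: list[dict]) -> dict:
    """Return the number and total value of paid orders."""

    # --- Filter: keep only the orders that were paid ---
    paid = [order for order in orders if order["status"] == "paid"]

    # --- Aggregate: add up the paid amounts ---
    total = sum(order["amount"] for order in paid)

    # --- Report: package the result for the caller ---
    return {"count": len(paid), "total": total}
```

The same logic fits on a single line, but a reader checking generated code can confirm each of the three steps separately, which is where most of the review time goes.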

Effectively training language models starts with high-quality, structured data. For a model to truly learn and apply knowledge, it needs consistent, reliable data that forms the foundation of its understanding. Quality data enables the model to build connections and recognize how different pieces of information fit together. Beyond raw data, providing the model with context is essential. One effective method is to use stories that frame the information in a way that makes it relatable and meaningful.

Creating good stories involves adding a wealth of related details while staying grounded in structured data. These stories help the model understand not just isolated facts but the broader context they belong to. For example, in the field of healthcare, structured data about treatments can be used to craft narratives about how specific symptoms led to particular diagnoses and treatments. These stories provide the model with insights into the interconnected nature of medical knowledge, teaching it how symptoms, processes, and outcomes relate to each other.
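A minimal sketch of this idea, assuming a very simple record layout: the `Case` fields, the sentence template, and the sample values are all invented for illustration and are not clinical data. A real pipeline would draw on richer records and more varied phrasing.

```python
from dataclasses import dataclass


@dataclass
class Case:
    """One structured record (hypothetical schema)."""
    age: int
    symptoms: list[str]
    diagnosis: str
    treatment: str
    outcome: str


def join_items(items: list[str]) -> str:
    """Join items as natural-language text: 'a', 'a and b', 'a, b and c'."""
    if len(items) <= 1:
        return "".join(items)
    return ", ".join(items[:-1]) + " and " + items[-1]


def case_to_story(case: Case) -> str:
    """Turn a structured record into a short narrative that links its fields."""
    if not case.symptoms:
        raise ValueError("a case needs at least one symptom to tell a story")
    return (
        f"A {case.age}-year-old patient presented with {join_items(case.symptoms)}. "
        f"Based on these symptoms, the clinician diagnosed {case.diagnosis} "
        f"and started {case.treatment}. "
        f"Outcome: {case.outcome}."
    )


# Made-up example record, for illustration only.
example = Case(
    age=34,
    symptoms=["fever", "sore throat"],
    diagnosis="strep throat",
    treatment="a course of antibiotics",
    outcome="symptoms resolved within a week",
)
print(case_to_story(example))
```

Because every sentence is generated from a field in the record, the story stays grounded in the structured data while still showing how symptom, diagnosis, treatment, and outcome connect.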

Such contextualized stories play an important role in helping the model learn how knowledge fits into larger systems and how it can be used. Instead of limiting itself to memorizing terms or concepts in isolation, the model gains a deeper understanding of how information interacts in real-world applications. This makes it better at adapting its responses to practical scenarios, like answering complex questions or solving problems where context is key.

When models are trained using detailed stories from structured data, particularly in specialized areas like healthcare, their ability to apply knowledge improves significantly. These stories not only enhance learning—they also prepare the model to make informed, context-aware decisions that are closer to how humans approach complex issues. Crafting narratives from structured data is a powerful way to unlock the full potential of language models and bring out their utility in a meaningful and impactful way.

Timing decisions are critical in both personal and professional contexts. One big question often arises: Should you move early, or is it better to wait? Both approaches have their advantages and drawbacks, and the right choice often depends on your goals, resources, and circumstances.

Moving early means acting before others. This approach can give you a competitive edge, as you’re the first to establish yourself or capitalize on an opportunity. Early movers often gain insights through direct experience and can position themselves as pioneers in their space. However, acting early comes with risks. There’s often little precedent to guide you, and being the first to act may lead to costly mistakes or uncertain outcomes if the timing isn’t right.

Waiting and moving later, on the other hand, is a more cautious approach. By observing others, you can learn from their successes and mistakes. This allows you to refine ideas and act strategically when the time feels right. Acting later also lets you evaluate whether an opportunity truly holds value before committing resources. However, waiting isn’t without risks either—you might miss out on key opportunities, or competitors could dominate while you’re still on the sidelines.

The decision to act early or late depends on evaluating the specific situation. Look at the potential value you can deliver and whether conditions are favorable for success. Consider the risks and rewards of both moving now and waiting. Acting early makes sense when innovation or speed is critical, but sometimes the right move is waiting for more concrete signs of success. Either way, focus on moving when you see a clear opportunity to create impact.

Timing is a balancing act, and there’s no single rule that works for every decision. What matters most is being intentional—whether you move first, wait, or adjust your timing later, your approach should align with your goals and the value you aim to achieve.